Colophon

A dad on paternity leave spent months thinking and two weeks not sleeping to turn a weekend photo gallery into a serverless photography platform with 9 Lambdas, zero client-side data fetching, and a self-healing CI/CD pipeline. Here's how.

1. The Problem

I just wanted to put photos on the internet. How hard could it be?

I didn't even know what a "source set" (`srcset`) was. My mental model was: upload JPEG, display JPEG. That's likely why I failed my interview at Instagram a decade ago. I say that mostly in jest.

I knew I couldn't return a bunch of 20 MB JPEGs to the client. Please hold while we eagerly download six above-the-fold images at 20 MB each; we'll be with you in 3-5 business days. But beyond that obvious problem, I knew nothing about large file optimization, hot-swapping images of varying qualities as they download, or blurhashes. I knew I didn't know this stuff, and that was at least a start.

What I did know: I wanted complete control over the experience. Control over the bytes on the wire, control over how images load, control over every pixel of the presentation. I had very little faith that something like Squarespace would give me that.

So I did what any reasonable person would do.

2. The Weekend Project

March 22-23, 2025. A two-day hackathon.

While my wife was away at her ladies-only baby shower, I spent every waking hour of that weekend slinging code by hand (the old-school way, before I became a Claude Code power user). In under 24 hours I had spengy.com live, and by the next day all of my pictures were uploaded to ImageKit. Voilà, v1 (or maybe v0.1) was alive.

I just needed to get something out there. But I knew this was never the final vision.

The commit messages from that weekend tell you everything you need to know:

"Junking all the astro bits"

"HI HANNA." (immediately reverted. don't ask.)

"I hate css."

Fast-forward one year and we've arrived at something I never planned for: a full photography platform with a 16-page admin UI for editorial management, drag-and-drop image organization, a custom image processing pipeline with blurhash generation and EXIF extraction, full-text search across every tag and metadata field, an analytics dashboard built on SQL queries over columnar data, a search debugger, pipeline execution monitoring, and a maintenance mode kill switch. I would have been happy with a gallery that loaded fast. But before we get to how all of that came to be: the hackathon result had some critical deficiencies.

3. The Struggle

April-May 2025. Image optimization hell.

I had, in retrospect, implemented an extremely suboptimal mechanism for computing a blurhash. Those are the placeholder backgrounds that show a sort of gradient or color impression of the image while it loads. Mine took about a minute to compute. Per image. One single image.

I was out of time to optimize, so to upload images I had to hard-code a limit on how many blurhashes each build would generate, then commit, build, deploy, and repeat. Over and over. It was hyper-tedious. Sure, Netlify build caches are a reasonable option, but those caches only stay warm for short periods, so every build was effectively a cold start.

The image optimization saga was its own bloodbath:

"Maybe this will work."

"Revert 'Maybe this will work.'"

"DUDE WTF"

"Revert 'Fixing image stacking.'"

Beyond that, I had no mechanism to add metadata to an image. No ability to sort them. No way to move images around. I had achieved my aim of getting something out into the world with the little bit of time I had while Hanna was pregnant. But it was damn hard to use, and it perpetually nagged at the back of my mind.

4. The Silence

June 2025 - February 2026.

"I stopped coding. I never stopped thinking."

Over the past year, anytime my mind drifted, this was one of the places it went. I architected this platform in my head (and through various conversations with Gemini) during walks, during feedings, during all the in-between moments of new parenthood. The git history tells the story: a handful of commits in October and November, then silence.

The best thinking happens away from the keyboard.

5. The Two-Week Sprint

March 8-21, 2026. 320 commits. 14 days. 133 PRs.

After about a week of planning sessions and architecting with Claude Code, I was ready to start slinging code again. And I was racing the clock.

It truly felt like work. The kind where you have a deadline you're committed to delivering on, because once paternity leave ends, free time shrivels to minuscule amounts. Not only did I need to deliver the platform, I needed to deliver it in a way that guaranteed it could be maintained with minimal effort going forward. This is a side project, after all. I can't be on call for my own photography site.

In 14 days: 320 commits, 9 Lambda functions, 1,437 tests, ~24,000 lines of TypeScript, and an entire admin UI. All between diaper changes, at odd hours of the night when I definitely should have been sleeping, and during naps (the baby's, not mine).

The commit messages from the sprint read like a fever dream:

"ITS WORKING!" March 10, the imgproxy breakthrough

"MAMA DIDNT RAISE NO QUITER" March 13, after 3 days fighting Sharp on arm64

"use the right image (I am so dumb omg)" also March 13

"OMG DUDE." March 17, Lighthouse

"STILL FIXING PLAYWRIGHT." March 16

"Still squishing." March 20, evolved from squashing to squishing

The Self-Maintaining Platform

Once I'm off paternity leave, I'm not going to have time to babysit dependency updates. So the platform needed to maintain itself. I built a CI/CD pipeline with automatic library upgrades that self-heal when they break: Claude Code running in a GitHub Action, fixing its own CI failures.

Dependency updates arrive automatically every weekend. CI runs the full suite: 1,437 tests, 9 arm64 Docker builds, Terraform validation, Lighthouse scores, and Playwright E2E. If CI fails, a GitHub Action feeds the failure logs to Claude Code, which diagnoses the issue, applies the minimum fix, and pushes. CI re-runs. If Claude's fix doesn't work, loop detection stops it from retrying forever and the pipeline flags the failure for manual intervention.
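The loop detection is what keeps a self-healing pipeline from healing itself forever. Here's a minimal sketch of how such a guard might decide between calling the fixer again and flagging a human, assuming the workflow can list earlier automated fix attempts on the branch along with a signature for each failure (say, the failing job plus its first error line). `decideNextStep`, `FixAttempt`, and the two-attempt cap are hypothetical names and values, not the actual workflow.

```typescript
// Hypothetical record of one earlier automated fix attempt on this branch.
interface FixAttempt {
  commitSha: string;
  failureSignature: string; // e.g. failing job name + first error line
}

type NextStep =
  | { kind: "invoke-fixer"; attempt: number }
  | { kind: "flag-for-human"; reason: string };

// Assumed cap; the real workflow's limit isn't stated.
const MAX_AUTO_FIX_ATTEMPTS = 2;

function decideNextStep(
  currentFailureSignature: string,
  previousAttempts: FixAttempt[],
): NextStep {
  // The same failure after a fix means the fix didn't help: stop looping.
  const repeated = previousAttempts.some(
    (a) => a.failureSignature === currentFailureSignature,
  );
  if (repeated) {
    return { kind: "flag-for-human", reason: "same failure persisted after an automated fix" };
  }
  // Different failures each time can still loop; cap the total.
  if (previousAttempts.length >= MAX_AUTO_FIX_ATTEMPTS) {
    return {
      kind: "flag-for-human",
      reason: `gave up after ${previousAttempts.length} automated fixes`,
    };
  }
  return { kind: "invoke-fixer", attempt: previousAttempts.length + 1 };
}
```

Keying on the failure signature, not just a counter, catches the most common bad loop: a "fix" that changes nothing and hits the same error again.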

I don't really need to do anything.

6. How It Actually Works

The Write Flow

"Drop a photo in S3. Everything else happens."

The S3 key path is the content hierarchy: photos/{section}/{collection}/{id}.ext. Upload a photo to the right path and the entire platform reacts.
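Because the key path carries the hierarchy, the first pipeline step can recover section, collection, and ID from the key alone. A minimal sketch, assuming keys look exactly like `photos/{section}/{collection}/{id}.ext` and that accepted formats come from an allow-list; `parsePhotoKey`, `PhotoKey`, and the extension list are illustrative, not the platform's code. One real detail worth handling: object keys in S3 event notifications arrive URL-encoded, with spaces as `+`.

```typescript
// Hypothetical allow-list; the real pipeline's accepted formats aren't stated.
const ALLOWED_EXTENSIONS = new Set(["jpg", "jpeg", "png", "webp", "heic"]);

interface PhotoKey {
  section: string;
  collection: string;
  id: string;
  extension: string;
}

function parsePhotoKey(rawKey: string): PhotoKey | null {
  // S3 event keys are URL-encoded ("red flower.jpg" arrives as "red+flower.jpg"),
  // so turn "+" back into spaces before percent-decoding.
  const key = decodeURIComponent(rawKey.replace(/\+/g, " "));
  const parts = key.split("/");
  // Expect exactly: photos / section / collection / file
  if (parts.length !== 4 || parts[0] !== "photos") return null;
  const [, section, collection, fileName] = parts;
  const dot = fileName.lastIndexOf(".");
  if (!section || !collection || dot <= 0) return null;
  const id = fileName.slice(0, dot);
  const extension = fileName.slice(dot + 1).toLowerCase();
  if (!ALLOWED_EXTENSIONS.has(extension)) return null;
  return { section, collection, id, extension };
}
```

Returning `null` for anything off-pattern lets the validation step reject stray uploads instead of guessing where they belong.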

One S3 upload triggers the entire chain. The file gets validated, metadata gets extracted in parallel (AI tagging of images via Rekognition, Sharp for dimensions and blurhash, ExifReader for all the camera nerd stuff), then it's written to DynamoDB and appended to a Parquet file for analytics. DynamoDB Streams keep the search index in sync automatically. I didn't even have to think about search during the write pipeline, which is probably the thing I'm most proud of architecturally.
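The stream-driven sync is what keeps search out of the write path: the pipeline only writes DynamoDB, and a separate consumer turns stream records into index updates. A minimal sketch of that translation, assuming the stream view type includes new images (`NEW_IMAGE` or `NEW_AND_OLD_IMAGES`) and that items have already been unmarshalled into plain objects. `StreamRecord`, `PhotoDoc`, and `toIndexOps` are simplified, hypothetical stand-ins, not the AWS SDK types or the platform's code.

```typescript
// Simplified, already-unmarshalled view of a DynamoDB Streams record.
// Real records carry attribute-value maps (e.g. { S: "..." }) that need
// unmarshalling first.
interface StreamRecord {
  eventName: "INSERT" | "MODIFY" | "REMOVE";
  keys: { id: string };
  newImage?: Record<string, unknown>;
}

// Hypothetical search document shape.
interface PhotoDoc {
  id: string;
  [field: string]: unknown;
}

interface IndexOps {
  upserts: PhotoDoc[];
  deletes: string[];
}

// Collapse a batch into one upsert list and one delete list.
// Records are processed in order, so the last event for an id wins.
function toIndexOps(records: StreamRecord[]): IndexOps {
  const latest = new Map<string, PhotoDoc | null>(); // null means "delete"
  for (const r of records) {
    const id = r.keys.id;
    if (r.eventName === "REMOVE") {
      latest.set(id, null);
    } else if (r.newImage) {
      latest.set(id, { ...r.newImage, id });
    }
  }
  const ops: IndexOps = { upserts: [], deletes: [] };
  for (const [id, doc] of latest) {
    if (doc === null) ops.deletes.push(id);
    else ops.upserts.push(doc);
  }
  return ops;
}
```

The two lists line up with Meilisearch's add-or-replace-documents and delete-documents-by-ID operations, so one stream batch becomes at most two index calls.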

Step Functions turned out to be the best decision I made early on. Need a new processing step? Add a state to the JSON, point it at a Lambda, done. Retries, error routing, parallel execution. All handled. The pipeline grew from 3 steps to 8 during the sprint, and honestly each addition took minutes. I kept waiting for it to get hard and it just never did.
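To make "add a state to the JSON" concrete: the parallel extraction maps onto a `Parallel` state in Amazon States Language. Below is a trimmed, hypothetical definition written as a TypeScript object (so it could be serialized into Terraform or passed to the Step Functions API). The state names, function names, and ARNs are placeholders, and the real pipeline has more steps than this.

```typescript
// Placeholder ARN prefix; 123456789012 is the account ID AWS uses in its docs.
const LAMBDA_ARN_PREFIX = "arn:aws:lambda:us-west-2:123456789012:function:";

// Shared retry policy for transient Lambda errors, with exponential backoff.
const RETRY = [
  {
    ErrorEquals: ["Lambda.ServiceException", "Lambda.TooManyRequestsException", "States.Timeout"],
    IntervalSeconds: 2,
    MaxAttempts: 3,
    BackoffRate: 2,
  },
];

// Route any error that survives retries to the terminal failure state.
const CATCH_ALL = [{ ErrorEquals: ["States.ALL"], Next: "IngestFailed" }];

// A Task state invoking a (hypothetical) Lambda; ends its branch if no `next`.
function lambdaTask(functionName: string, next?: string) {
  return {
    Type: "Task",
    Resource: LAMBDA_ARN_PREFIX + functionName,
    Retry: RETRY,
    ...(next ? { Next: next } : { End: true }),
  };
}

const pipelineDefinition = {
  Comment: "Trimmed, hypothetical sketch of the photo ingest pipeline",
  StartAt: "Validate",
  States: {
    Validate: { ...lambdaTask("validate", "ExtractMetadata"), Catch: CATCH_ALL },
    // The three extractors run concurrently. The Parallel state's output is
    // an array with one element per branch, in branch order.
    ExtractMetadata: {
      Type: "Parallel",
      Branches: [
        { StartAt: "DetectLabels", States: { DetectLabels: lambdaTask("rekognition-labels") } },
        { StartAt: "ImageStats", States: { ImageStats: lambdaTask("sharp-dimensions-blurhash") } },
        { StartAt: "ReadExif", States: { ReadExif: lambdaTask("exif-reader") } },
      ],
      Catch: CATCH_ALL,
      Next: "WriteToDynamo",
    },
    WriteToDynamo: { ...lambdaTask("write-dynamo", "AppendParquet"), Catch: CATCH_ALL },
    AppendParquet: { ...lambdaTask("append-parquet"), Catch: CATCH_ALL },
    IngestFailed: { Type: "Fail", Error: "IngestFailed", Cause: "A pipeline step failed after retries" },
  },
};
```

`JSON.stringify(pipelineDefinition, null, 2)` yields the ASL JSON. In this shape, growing the pipeline means one more entry in `States` and one changed `Next` pointer; retries and failure routing come along with the shared helper.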

The Read Flow

"Static HTML. Zero client-side data fetching. Blazing fast."

Every page on spengy.com is prerendered at build time. There's exactly one server-rendered page (search, because you can't pre-build every possible query). The browser gets fully rendered HTML with inline CSS gradients as image placeholders. The only client-side JavaScript (171 lines total, I counted) handles keyboard shortcuts, search overlay animations, and scroll-reveal effects. No API calls. No hydration. Zero client-side rendering.

CloudFront sits in front of four Lambda functions (CMS, search, analytics, imgproxy), each specialized for its query pattern. Every Lambda verifies the full RS256 JWT signature before doing any work.
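Verifying "the full RS256 JWT signature" means checking the RSA-SHA256 signature over the encoded header and payload, not just base64-decoding the payload. A minimal sketch using only Node's built-in `crypto` module, assuming the public key is already available as a PEM string (fetching and caching it from a JWKS endpoint is left out). `verifyRs256` is a hypothetical helper, not the platform's code; in production a maintained JWT library is the safer choice.

```typescript
import * as crypto from "node:crypto";

interface JwtClaims {
  sub?: string;
  exp?: number; // seconds since the Unix epoch
  [claim: string]: unknown;
}

// Returns the claims if the token is a valid, unexpired RS256 JWT; throws otherwise.
function verifyRs256(
  token: string,
  publicKeyPem: string,
  nowSeconds: number = Math.floor(Date.now() / 1000),
): JwtClaims {
  const parts = token.split(".");
  if (parts.length !== 3) throw new Error("malformed token");
  const [headerB64, payloadB64, signatureB64] = parts;

  // Pin the algorithm in code; never let the token's header choose it.
  const header = JSON.parse(Buffer.from(headerB64, "base64url").toString("utf8"));
  if (header.alg !== "RS256") throw new Error(`unexpected alg: ${header.alg}`);

  // The signature covers "<header>.<payload>" exactly as transmitted.
  // RSA keys default to PKCS#1 v1.5 padding, which is what RS256 uses.
  const signingInput = Buffer.from(`${headerB64}.${payloadB64}`, "utf8");
  const signature = Buffer.from(signatureB64, "base64url");
  if (!crypto.verify("sha256", signingInput, publicKeyPem, signature)) {
    throw new Error("bad signature");
  }

  // Only trust the claims after the signature checks out; require an expiry.
  const claims = JSON.parse(Buffer.from(payloadB64, "base64url").toString("utf8")) as JwtClaims;
  if (typeof claims.exp !== "number" || claims.exp <= nowSeconds) {
    throw new Error("token expired or missing exp");
  }
  return claims;
}
```

Pinning `alg` in code instead of reading it from the header is the important design choice: it closes off the classic `alg: none` and algorithm-confusion attacks.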

OK, the thing I can't stop telling people about: the blurhash pipeline. Most galleries decode blurhash in the browser. That's ~8KB of JavaScript on every page load for what amounts to a blurry rectangle. We decode it at build time into a CSS linear-gradient() that's inlined directly in the HTML:

```ts
// Image.astro: this runs during `astro build`, never in the browser
const placeholderCss = blurhash
  ? `background-image: ${blurhashToCssGradientString(blurhash)}; background-size: cover;`
  : undefined;
```

No JavaScript. No placeholder images. No hydration. The browser renders the gradient instantly from static HTML, and the real image fades in over it.
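For a sense of what decoding a blurhash at build time involves: the string packs its average color into characters 2 through 5 as a base-83 number, so even without the full gradient decode you can recover a solid fallback color with a little arithmetic. This is a hypothetical helper (`blurhashAverageColor`), not the `blurhashToCssGradientString` call in `Image.astro`; the sample hash is the well-known example from the blurhash project.

```typescript
// The blurhash base-83 alphabet, in digit order.
const BASE83 =
  "0123456789ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz#$%*+,-.:;=?@[]^_{|}~";

function decode83(s: string): number {
  let value = 0;
  for (const ch of s) {
    const digit = BASE83.indexOf(ch);
    if (digit === -1) throw new Error(`invalid base83 character: ${ch}`);
    value = value * 83 + digit;
  }
  return value;
}

// Hypothetical helper: the blurhash's average (DC) color as a CSS rgb() string.
function blurhashAverageColor(hash: string): string {
  if (hash.length < 6) throw new Error("blurhash too short");
  // Char 0 encodes the component grid size; the total length must match it.
  const size = decode83(hash[0]);
  const numY = Math.floor(size / 9) + 1;
  const numX = (size % 9) + 1;
  if (hash.length !== 4 + 2 * numX * numY) throw new Error("invalid blurhash length");

  // Chars 2-5 hold the DC component as a packed 24-bit sRGB integer.
  const dc = decode83(hash.slice(2, 6));
  const r = dc >> 16;
  const g = (dc >> 8) & 255;
  const b = dc & 255;
  return `rgb(${r}, ${g}, ${b})`;
}

console.log(blurhashAverageColor("LEHV6nWB2yk8pyo0adR*.7kCMdnj")); // rgb(151, 150, 149)
```

Like the gradient, this would run once per image during `astro build`; the browser only ever receives the resulting CSS string.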

Single Region, By Choice

The entire backend lives in us-west-2. No failover region, no multi-region replication. That sounds reckless until you think about what actually serves traffic.

Every page except search is static HTML served from Netlify's edge. The API only gets called during astro build. So if us-west-2 goes down after a deploy? The site keeps serving. Nobody notices. I didn't plan it this way for resilience reasons. It's just a side effect of static rendering. But I'll take it.

Image processing gets an extra layer of resilience from CloudFront Origin Shield, which acts as a cross-datacenter shared cache in front of imgproxy. Once an image variant is generated, it's cached at the shield tier and then at every edge location that requests it. The Lambda rarely gets hit twice for the same transformation.
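Cache hits at the shield and the edges depend on every request for the same variant producing a byte-identical URL. A minimal sketch of building such URLs deterministically, assuming imgproxy's URL signing is enabled (an HMAC-SHA256 over salt plus path, using hex-decoded key and salt) and that sources are referenced as `s3://` URLs. `imgproxyPath`, `Variant`, and the chosen processing options are illustrative, not the platform's configuration.

```typescript
import { createHmac } from "node:crypto";

// Hypothetical variant description used by the gallery templates.
interface Variant {
  width: number;
  quality: number;
  format: "webp" | "avif" | "jpg";
}

// Builds a signed imgproxy path. Options are emitted in a fixed order so the
// same variant always yields the same URL, and therefore the same cache key.
function imgproxyPath(sourceUrl: string, v: Variant, key: Buffer, salt: Buffer): string {
  // rs:fit:<width>:0 resizes to the width and keeps the aspect ratio; q sets quality.
  const options = `rs:fit:${v.width}:0/q:${v.quality}`;
  // Base64url-encoding the source avoids having to escape special characters.
  const encodedSource = Buffer.from(sourceUrl).toString("base64url");
  const path = `/${options}/${encodedSource}.${v.format}`;
  // Signature = base64url(HMAC-SHA256(key, salt + path)), without padding.
  const signature = createHmac("sha256", key).update(salt).update(path).digest("base64url");
  return `/${signature}${path}`;
}

// Example with obviously fake key and salt (real ones come from IMGPROXY_KEY / IMGPROXY_SALT).
const demoKey = Buffer.from("deadbeef".repeat(8), "hex");
const demoSalt = Buffer.from("cafebabe".repeat(8), "hex");
console.log(imgproxyPath("s3://photos-bucket/photos/travel/iceland/42.jpg", { width: 800, quality: 80, format: "webp" }, demoKey, demoSalt));
```

Because the options are written in a fixed order and the signature is a pure function of the path, the same variant always produces the same URL, which is what lets Origin Shield and the edges answer repeat requests without touching the Lambda.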

That leaves search as the only feature with a runtime dependency on us-west-2. And search is a bonus. The gallery works perfectly without it. If Meilisearch goes down for an hour, you lose the search overlay. You don't lose a single photo. That tradeoff felt right for a side project that costs $5/month.

If I ever need multi-region, the path is there. DynamoDB Global Tables for content replication, and since search indexing already runs off DynamoDB Streams, a second region's stream would just keep its own Meilisearch in sync. But realistically? I'm not going to need it. This is a photography site, not Netflix.

7. The 11-Day CLI

I built a full terminal UI with Ink and React and then I threw it away. 25 screens. System status, content management, analytics, collection creation, a full upload workflow with S3 progress tracking. 83 files. 9,895 lines. It lived for exactly 11 days.

Then I replaced it with an Astro + React admin panel. 16 pages and 59 API endpoints. In one commit: "Ditching CLI and replacing with admin UI as well as patching server issues."

The success story here isn't the CLI. It's that it worked until it didn't, and then I had zero attachment to it. Claude wrote all of that code. When the CLI hit its limits, I pointed Claude at it and said "make this a web app." First try. Nailed it. Sometimes the best code is the code you delete.

8. Build the Tools to Build the Thing

I wanted to use PayloadCMS. Code-first, define your schema in TypeScript, get an admin UI for free. What's not to love? But it needs a Postgres instance: $14/month for the smallest RDS. For a photography site where I'd be thrilled to get ten visitors a day, that cost just isn't justified.

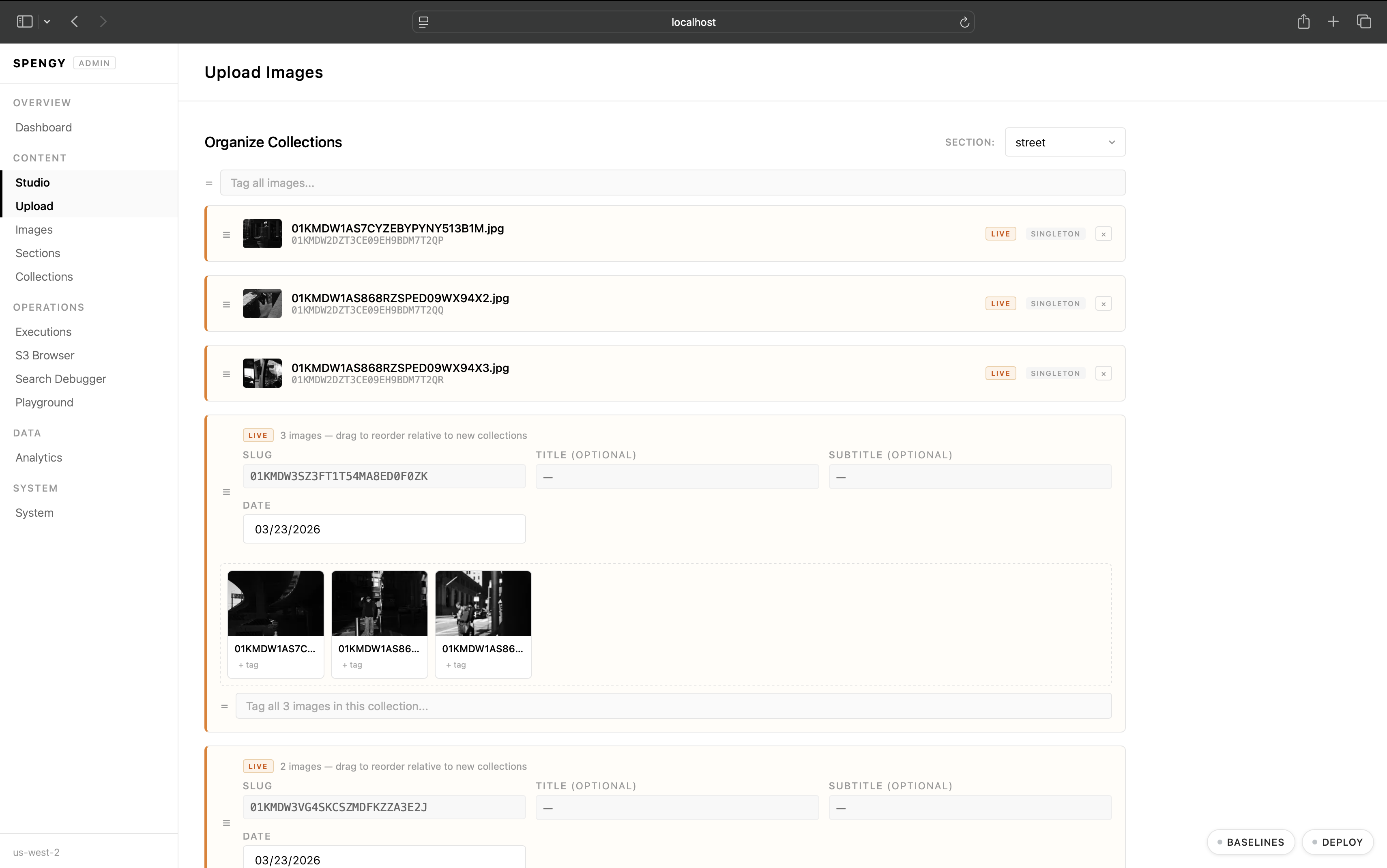

So I built my own. A few Lambdas, four DynamoDB tables (on-demand billing, basically free at my traffic), and a 16-page admin UI that provides full editorial control: drag-and-drop image organization, collection metadata editing, gallery previews using the exact same components as the public site, pipeline monitoring, search debugging, and a maintenance mode kill switch.

I built a whole UI to build my UI.

The whole platform runs for about $5/month on AWS. To replicate it with SaaS products (image CDN, search, CMS, analytics), you'd be looking at $200+/month on paid tiers. Even cobbling together free tiers, you'd still lose the custom EXIF analytics dashboard and the fine-grained control over every pixel of the gallery and every step of the image processing pipeline. The RDS instance alone for PayloadCMS ($14/month) would have cost nearly three times my entire monthly bill.

My philosophy, and the thing I really want to come through here: if what I'm building is hard to build, then I don't have the right tools to build it yet. So I step back and build the tools to make that product an absolute success.

9. Why Bother?

Why build all this when Squarespace exists?

Because owning your work matters. Not renting shelf space on someone else's platform. Actually owning it. The images, the metadata, the layout, the experience, the infrastructure. Every pixel, every byte, every cache header. I realize that sounds obsessive. It probably is.

But for me the engineering isn't separate from the photography. It's part of it. Building the platform is as much a creative act as taking the photos. The platform is the frame, and I wanted to build my own frame.

I could have shipped photos on Squarespace in an afternoon. Instead I built a serverless CQRS platform with 9 Lambdas, a self-healing CI/CD pipeline, and a custom CMS. Between diaper changes, during 3am feedings, in the quiet hours of paternity leave when the house was still and the baby was finally sleeping and I definitely should have been too.

I did it because someday my daughter is going to ask what her dad does. And I want to show her something I made with my own hands. Not a Squarespace template with my name on it. Something I poured my soul into during the hardest, most sleep-deprived, most meaningful months of my life. Every photo of her first year, hosted on infrastructure her dad built from scratch. That matters to me.

"She may not look like much, but she's got it where it counts, kid."